In our “Splitblog” section, we take up topic suggestions from our team and often examine them critically, even when the subject is artificial intelligence. This month I, Katha, got to choose a topic myself…

Look left and right these days, or even just at the smartphone in your own hand, and you will feel fear and anxiety more and more often. Reports about wars, crises, German and global politics, attacks and other threats have become a fixed part of the daily news. Populism in all its forms influences us more than we are often aware of (our recommendation: https://www.zdf.de/show/mai-think-x-die-show/maithink-x-folge-31-populismus-100.html ). It has been difficult enough in recent years to tell fact from cleverly placed fiction, and now another challenge is increasingly being added: deepfakes.

WHAT ARE DEEPFAKES ACTUALLY?

Deepfakes are false reports created using artificial intelligence. They can be simple texts and articles, but also photos, audio files or videos. Convincing image manipulation in particular used to require a great deal of expertise, but the huge number of freely available AI tools is making it increasingly easy to generate believable fakes. Deepfakes are used deliberately to spread false information, for various reasons and by various camps.

HOW DO I RECOGNIZE DEEPFAKES?

It gets really interesting when it comes to the question of how to protect yourself from falling for deepfakes. That is not easy, because the technology keeps improving rapidly. If you want to test your ability to distinguish between human and machine, you can do so here, for example: https://www.humanornot.ai/. There are various tools, some of them AI-based themselves, that promise to unmask AI-generated content. Unfortunately, none of them works reliably so far. So what else can you do?

CHECK FACTS:

Regardless of whether it is text, a (moving) image or sound, try to assess as neutrally as possible whether the statements it contains can be true and are logically coherent. If you cannot judge this on your own, it is worth looking for further reports on the topic. It often helps to look at the alleged facts from different angles. Helpful resources include, for example, www.mimikama.org, www.correctiv.org and other fact-checking portals.

CHECK SOURCES:

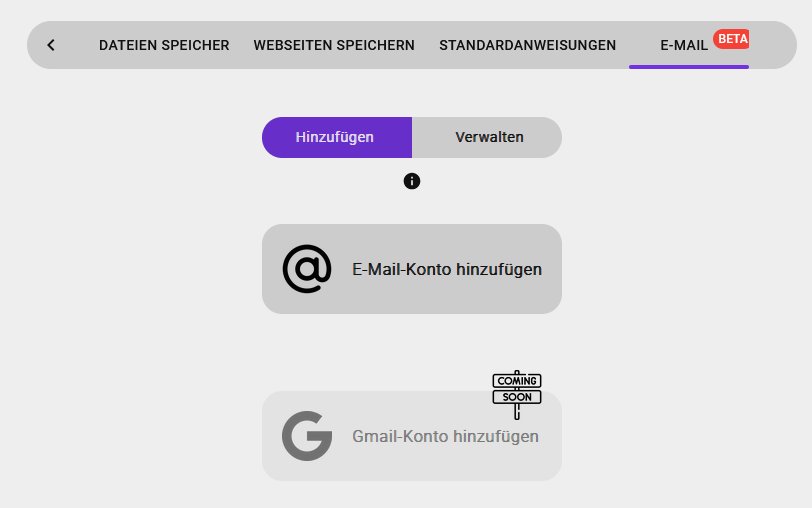

Another important indicator of reliability is where the message comes from. Who is spreading this information? Is it a reputable media outlet, or is the origin unknown? (Did you know? Our chatbot KOSMO lists the sources it used with every generated answer.)

FIND EVIDENCE:

As with any investigation, the same question applies here: is there evidence for the message or for the claims it contains? Could the person shown or quoted have been at the location at all?

LOOK CLOSELY:

With photos and videos in particular, you should take a close look. At least for now, AI-generated images and videos are often not perfect. You may find extra fingers, unrealistic teeth or details that do not fit, such as jewelry that appears out of nowhere. In videos especially, the lip movements often do not match the soundtrack, or the facial expressions seem strikingly unnatural. The image background can also be very revealing. Does the perspective fit? Could the picture have been taken from this point of view? If the image or video passes this first assessment, it is often still worth doing a reverse image search. Google Lens, for example, lets you use images from your smartphone for an Internet search. Alternatively, you can enter the URL of the image into a search engine that supports reverse image search. Often you will come across the original photo that was used to create a fake video. If the message is about a major event, you can assume that you will find more pictures. After all, almost everyone has a smartphone with a camera these days.
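For readers who want to automate the reverse search step, here is a minimal Python sketch that turns an image URL into ready-to-open reverse image search links. The helper name `reverse_search_links` is made up for this example, and the two URL patterns are assumptions based on how these services currently accept image URLs in the browser. They are not documented, stable APIs and may change at any time.

```python
from urllib.parse import quote, urlparse

# Assumed URL patterns (not official, stable APIs; they may change).
REVERSE_SEARCH_PATTERNS = {
    "Google Lens": "https://lens.google.com/uploadbyurl?url={image_url}",
    "TinEye": "https://tineye.com/search?url={image_url}",
}


def reverse_search_links(image_url: str) -> dict[str, str]:
    """Build one reverse image search link per service for the given image URL.

    Hypothetical helper for illustration only.
    """
    parsed = urlparse(image_url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError("expected a full http(s) image URL")

    # Percent-encode the whole image URL so it survives as a query parameter.
    encoded = quote(image_url, safe="")
    return {
        name: pattern.format(image_url=encoded)
        for name, pattern in REVERSE_SEARCH_PATTERNS.items()
    }


if __name__ == "__main__":
    # example.com is a placeholder; use the URL of the image you want to check.
    for name, link in reverse_search_links("https://example.com/photo.jpg").items():
        print(f"{name}: {link}")
```

The script only builds the links. You still open them in a browser and judge the results yourself, for example whether an older original of the photo turns up.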

OUR CONCLUSION:

In the future, we will all be confronted more and more often with increasingly convincing deepfakes. That makes it all the more important to prepare for this and to know how to recognize them.

MORE ON THE TOPIC:

Further information can be found, for example, in BR's #Faktenfuchs section or at klicksafe.de. ZDF has also dedicated an episode of its logo! series to deepfakes, presented in a way that is suitable for children and young people.